- Blog

- How to add plugin boris fx 10 to vegas

- Eviews autocorrelation

- How to get putty off a carpet

- Remove a game from recent activity steam

- Monect pc remote pc

- How to use pepakura designer

- Snark busters 3 high society download

- Zetetic astronomy parallax

- Ecm tools windows

- Hollywood movie a to z hindi dubbed

- Autocad 2013 64 bit keygen only

- Mmorpg gangster games

- Apple icloud storage plans rates

- Rainbow six siege redeem codes ps4 2017

First, I import all of my dependencies.

```python
import pandas as pd
from influxdb import InfluxDBClient
from statsmodels.graphics.tsaplots import plot_acf
from statsmodels.graphics.tsaplots import plot_pacf
```

Next, I connect to the client, query my water temperature data, and plot it.

```python
# Connect to a local InfluxDB 1.x instance
client = InfluxDBClient(host='localhost', port=8086)
h2O = client.query('SELECT mean("degrees") AS "h2O_temp" FROM "NOAA_water_database"."autogen"."h2o_temperature" GROUP BY time(12h) LIMIT 60')
# Convert the result set to a DataFrame with an integer time step (conversion step assumed; not shown in the text)
h2O_df = pd.DataFrame(list(h2O.get_points()))
h2O_df['time_step'] = range(len(h2O_df))
h2O_df.plot(kind='line', x='time_step', y='h2O_temp')
```

From looking at the resulting plot, it's not obvious whether or not our data has any autocorrelation. For example, I can't detect any seasonality, which would yield high autocorrelation.

I can calculate the autocorrelation with a function that returns the value of the Pearson correlation coefficient. The Pearson correlation coefficient is a measure of the linear correlation between two variables. It has a value between -1 and 1, where 0 means no linear correlation, values above 0 indicate a positive correlation, and values below 0 indicate a negative correlation. The autocorrelation of the water temperature data is close to 0, which indicates that our data doesn't have any significant autocorrelation.

At first, I found this result surprising, because the air temperature on one day is usually highly correlated with the temperature the day before. I assumed the same would be true about water temperature.
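The lag-1 autocorrelation described above can be sketched with pandas, assuming the function in question is pandas' `Series.autocorr`, which returns the Pearson correlation between a series and a copy of itself shifted by `lag` steps. The values below are a short hypothetical stand-in, not the NOAA water temperature data; the manual `shift`/`corr` line shows what the lagged comparison actually computes.

```python
import pandas as pd

# Hypothetical stand-in for the h2O_temp column (NOT the NOAA data)
temps = pd.Series([64.0, 65.5, 63.8, 66.1, 64.9, 65.2, 63.5, 66.4, 64.2, 65.8])

# pandas: Pearson correlation between the series and itself shifted by one step
lag1 = temps.autocorr(lag=1)

# The same quantity by hand: pair x_t with x_{t-1} and correlate.
# shift(1) puts a NaN in the first position; corr() drops incomplete pairs.
manual = temps.corr(temps.shift(1))

print(f"autocorr(lag=1) = {lag1:.4f}")
print(f"manual Pearson  = {manual:.4f}")
```

A value near 0 (as reported for the water temperature data) means today's value tells you little, linearly, about tomorrow's; values near 1 or -1 mean strong lag-1 dependence.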

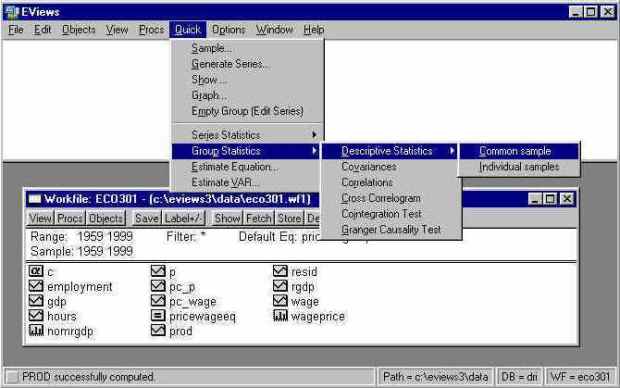

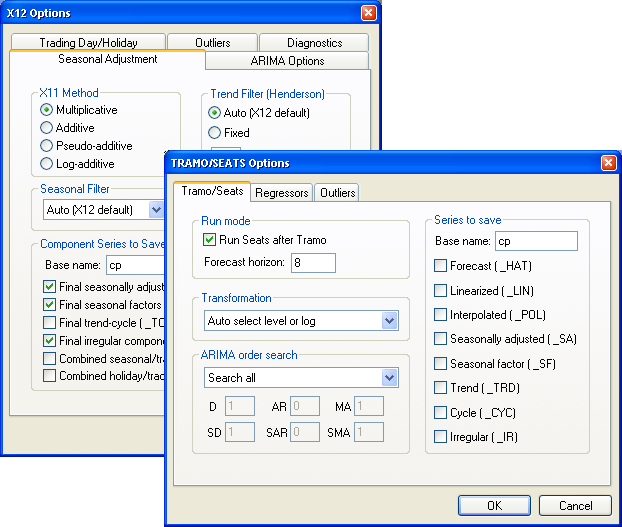

Eviews autocorrelation code

I am using available data from the National Oceanic and Atmospheric Administration's (NOAA) Center for Operational Oceanographic Products and Services. Specifically, I will be looking at the water levels and water temperatures of a river in Santa Monica. I load the data into InfluxDB with the `influx` CLI:

```shell
influx -import -path=NOAA_data.txt -precision=s -database=NOAA_water_database
```

This analysis and code are included in a Jupyter notebook in this repo.

Eviews autocorrelation how to

Autocorrelation is when a time series is linearly related to a lagged version of itself. By contrast, correlation is simply when two different variables are linearly related.

Often, one of the first steps in any data analysis is performing regression analysis. However, one of the assumptions of regression analysis is that the data has no autocorrelation. This can be frustrating, because if you try to do a regression analysis on data with autocorrelation, your analysis will be misleading.

Additionally, some time series forecasting methods (specifically regression modeling) rely on the assumption that there isn't any autocorrelation in the residuals (the differences between the fitted model and the data). People often use the residuals to assess whether their model is a good fit while ignoring the assumption that the residuals have no autocorrelation (which holds, for example, when the errors are independent and identically distributed, or i.i.d.). This mistake can mislead people into believing that their model is a good fit when in fact it isn't.

I highly recommend reading the article How (not) to use Machine Learning for time series forecasting: Avoiding the pitfalls, in which the author demonstrates how the increasingly popular LSTM (Long Short-Term Memory) network can appear to be an excellent univariate time series predictor when in reality it's just overfitting the data. He goes further to explain how this misconception is the result of accuracy metrics failing due to the presence of autocorrelation.

Finally, perhaps the most compelling aspect of autocorrelation analysis is how it can help us uncover hidden patterns in our data and select the correct forecasting methods. Specifically, we can use it to help identify seasonality and trend in our time series data. Additionally, analyzing the autocorrelation function (ACF) and partial autocorrelation function (PACF) together is a standard way to select an appropriate ARIMA model for your time series prediction.

How to Determine if Your Time Series Data Has Autocorrelation

For this exercise, I'm using InfluxDB and the InfluxDB Python client library.

Eviews autocorrelation serial

What Is Autocorrelation?

A time series is a series of data points indexed in time. The fact that time series data is ordered makes it unique in the data space, because it often displays serial dependence. Serial dependence occurs when the value of a data point at one time is statistically dependent on a data point at another time. However, this attribute of time series data violates one of the fundamental assumptions of many statistical analyses: that data is statistically independent.

Autocorrelation is a type of serial dependence.
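To make "linearly related to a lagged version of itself" concrete, the sketch below implements the usual lag-k sample autocorrelation, the sum of (x_t - mean)(x_{t+k} - mean) divided by the sum of (x_t - mean)², with NumPy. It compares independent noise against a synthetic AR(1) process, x_t = 0.7·x_{t-1} + ε_t, in which each value depends on the previous one. The coefficient 0.7, the length, and the seed are arbitrary assumptions; for an AR(1) process the theoretical lag-k autocorrelation is 0.7^k.

```python
import numpy as np

def sample_acf(x: np.ndarray, k: int) -> float:
    """Lag-k sample autocorrelation:
    r_k = sum_{t=0}^{n-k-1} (x_t - mean)(x_{t+k} - mean) / sum_t (x_t - mean)^2
    """
    d = x - x.mean()
    # d[:n-k] pairs each value with the one k steps later in d[k:]
    return float(np.dot(d[: len(d) - k], d[k:]) / np.dot(d, d))

rng = np.random.default_rng(7)
n, phi = 1000, 0.7

# Independent draws: no serial dependence
white = rng.standard_normal(n)

# AR(1): each value depends linearly on the previous one
ar1 = np.zeros(n)
for t in range(1, n):
    ar1[t] = phi * ar1[t - 1] + rng.standard_normal()

for k in (1, 2, 3):
    print(f"lag {k}: white noise r_k = {sample_acf(white, k):+.3f}, "
          f"AR(1) r_k = {sample_acf(ar1, k):+.3f} (theory {phi**k:.3f})")
```

The independent series stays near 0 at every lag, while the AR(1) series decays roughly geometrically, which is the serial dependence that breaks the independence assumption described above.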
